Our Investment in definity

The runtime brain for the AI-native lakehouse

We’re proud to partner with Roy Daniel, Ohad Raviv, Tom Bar-Yacov, and the definity team as they build the runtime intelligence layer for the modern data stack.

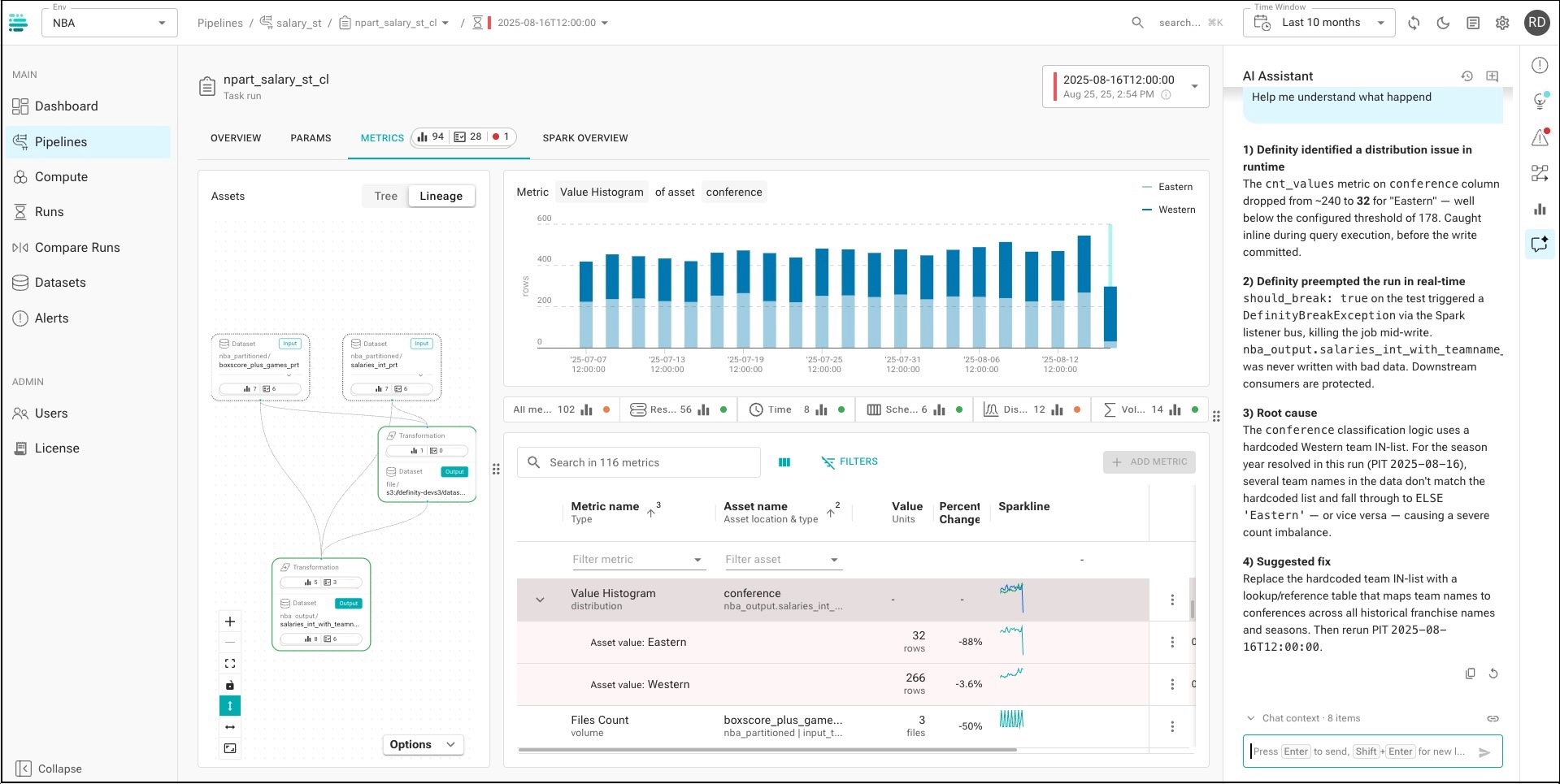

definity helps enterprises see, fix, and eventually automate what happens inside Spark, Databricks, EMR, Dataproc, and on-prem lakehouse environments. Its lightweight agent runs inside the runtime itself, giving teams job-level visibility into cost, performance, reliability, lineage, and risk, then turning that context into specific actions.

The simple idea: data infrastructure should not just tell you something broke. It should know what changed, what it costs, what to do next, and how to fix it safely.

The Black Box at the Center of the Enterprise

The lakehouse has become the operating system for enterprise data. Revenue reporting, fraud models, personalization, ad delivery, risk workflows, and AI applications increasingly depend on mission-critical pipelines running reliably every day. These heavy pipelines typically run on Spark, a distributed processing engine.

But the operational layer has not kept up.

Teams can see cloud bills. They can monitor clusters. They can inspect data quality after a table breaks. What they often cannot see is the live behavior of the job itself: why it is slow, why it is expensive, why it is at risk of missing an SLA, or which exact configuration change would make it better.

That blind spot is expensive. Enterprises are dealing with seven- and eight-figure lakehouse estates, 30-50% waste in targeted workloads, and mission-critical jobs that can run for 10+ hours before anyone knows what went wrong.

definity attacks the problem where it actually happens: inside the runtime.

From Dashboards to Decisions

Most tools show symptoms. definity is built to prescribe action.

By sitting inside the Spark driver, definity can understand job execution as it happens: data volumes, freshness, and distribution; pipeline runtime, internal retries, and SLAs; true executor and memory utilization, data skew, and machine configurations; code inefficiencies, execution patterns, and deep lineage; granular job-level cost; and downstream impact, to name a few. That gives data engineering teams a new kind of control plane, one that can say: change this configuration, reduce this waste, validate this upgrade, protect this SLA.

That is why the product resonates with sophisticated data teams. It does not ask them to interpret another chart. It gives them a ranked backlog of real savings and reliability opportunities, then proves the impact on the next run.

At DirecTV, definity helped reduce a flagship EMR pipeline from roughly 17 hours to about 4.5 hours. At Nexxen, a global AdTech company, approximately 44 percent of the 50,000-plus-core Spark estate was optimized. Superhuman (Grammarly) is boosting developer velocity with auto-optimizations. Other Fortune 500 companies, enterprise teams, and digital natives with large lakehouse environments are seeing the same gap: the lakehouse needs an operator layer.

Why This Moment Belongs to definity

Three shifts make this urgent now.

First, lakehouse spend has become a board-level issue. When large enterprises spend millions of dollars a year on Databricks, EMR, Dataproc, and Spark, waste is no longer an engineering inconvenience. It is margin pressure.

Second, AI is making the problem harder. More AI-generated code, more pipelines, more experiments, and more automated workflows mean more runtime volatility. The old model, where senior engineers manually tune jobs in Spark UI, does not scale.

Third, agentic data engineering needs a safe execution layer. Agents can write code and propose changes, but they need a trusted runtime context before they can operate production infrastructure. They need to know what is safe, what changed, what will break, and what should be rolled back.

definity is building that runtime context and control layer.

It helps humans and, more importantly, AI agents understand, optimize, fix, and operate lakehouse pipelines.

The Team Lived the Scar Tissue

We love infrastructure companies founded by people who have felt the pain directly. definity is exactly that.

Roy Daniel (CEO) brings the product and enterprise instinct required to turn a deep technical problem into a business-critical platform. With experience across FIS and McKinsey, Roy has led enterprise-scale data modernization efforts and understands how large organizations buy, how CFOs think about waste, and how data platform leaders justify new infrastructure.

Ohad Raviv (CTO) is the technical center of gravity. A former PayPal data engineering lead and Apache Spark contributor, Ohad knows the runtime inside and out. That matters because definity’s advantage is not a prettier interface. It is architectural: the product sees what external dashboards miss because it sits where the work is actually happening.

Tom Bar-Yacov (VP of R&D) brings the execution muscle. With experience in PayPal data engineering and IDF R&D, Tom helps turn that runtime insight into a product that can deploy quickly, operate in complex enterprise environments, and earn the trust of skeptical platform teams.

Together, the team has the rare mix we look for: deep technical credibility, customer empathy, enterprise urgency, and the discipline to avoid overclaiming. For a company operating inside mission-critical data infrastructure, that trust is everything.

The Runtime Layer for Autonomous Data

definity starts with a painful wedge: cut lakehouse waste, prevent incidents, and make Spark easier to operate.

But the bigger vision is more important.

As enterprises become AI-native, data infrastructure cannot remain opaque, reactive, and manually tuned. It needs an intelligence layer that understands every job, every cost driver, every SLA risk, and every safe action. It needs a system that can help humans today and guide agents tomorrow.

That is what definity is building.

We’re excited to partner with Roy, Ohad, Tom, and the definity team as they turn the lakehouse from a black box into an intelligent, self-optimizing runtime for the AI era.